“There’s no such thing as a strong strategy that prioritises everything at once.”

Wrapping up a year always makes me reflect on which of my annual goals were achieved (or missed). Compared to last year, I think I’ve managed to be more focussed in 2025. Overall, I’m pretty happy with my year. Some things done:

- Found Algorithmic Governance Foundation

- Have functional AGF website

- Advertise new AGF projects

- Acquire office for AGF co-work

- Acquire AGF co-director

- Acquire AGF volunteers and project leads

- Write code for contributing data cleaning on GitHub

- Acquire AGF funding (not a small feat!)

- Deploy INFLOW-AI temporal and spatial model

- Write paper for INFLOW-AI model

- Publish INFLOW-AI paper

- Publish INFLOW-AI dataset

- Write Early Action Protocol (EAP) for INFLOW-AI model

- Deploy INFLOW-AI spatial model

- Build automation to detect disaster risk for 510

- Acquire project to predict global conflict

- Acquire project to track conflict in Gaza

- Get volunteers to map displacement tents in the Gaza Strip

- Develop machine learning pipeline to detect tents in Gaza Strip

- Acquire project to track disease progression using LLMs

- Build model to track AMR policies using LLMs for client

- Build model to predict dissolved oxygen in Andhra Pradesh

- Build left-wing vote-splitting model for Your Party

- Write policy briefings for Think7

- Promote briefings at Kananaskis Summit

- Build website to host graphic videos from Gaza for activists

- Promote Rules as Code at the French Foreign Ministry

And for personal learning goals:

- Get into PhD program at Oxford

- Get PhD Funding

- Understand extreme value theory

- Learn 1,000 Chinese words

Sometimes I’m a bit hard on myself, but putting it all together makes me feel quite accomplished for the year.

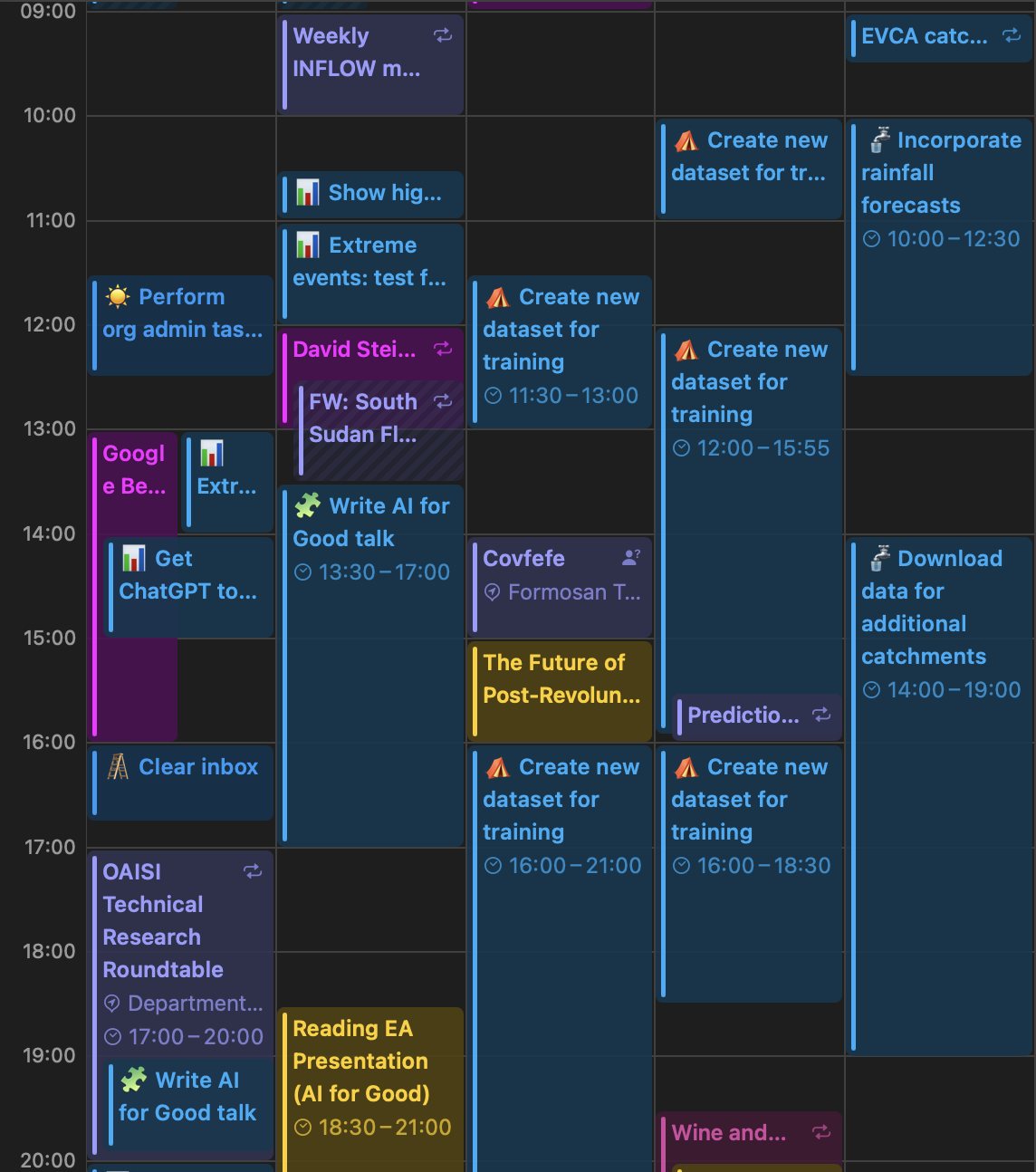

Note on Life Organisation

Since my last post, I have been actively working to improve my time management and allow myself to fully focus/deep dive into projects, instead of jumping back and forth between everything while making minor improvements. Partly inspired by Zhi-Yi’s reflections on her Oxford fellowship, I’ve been “time blocking”, which is basically scheduling time slots in advance that are dedicated to working on a particular thing. It seems really boring and overly bureaucratic, but is pretty effective for forcing me to plan my time more reasonably and move on from something if it is taking more time than expected.

Also useful for tracking how much time I’m spending on a given thing.

Some Presentations

I was asked to give a few presentations this month. The first was at the University of Reading, where I got to talk to undergrad computer science and maths students about careers in AI for Good. This is not something I do very often, and I really enjoyed it and ended up spending almost an hour after the presentation just talking to the students and answering their questions.

What stood out to me the most was presenting some work I’ve done on automating jurisdictional scans using web scraping/LLMs. I mentioned off-handedly that the tool has fairly substantial limitations (it is not an oracle), but performs at roughly the level of a junior analyst – effectively automating their role for this project. This brought up an uncomfortable question for the baby undergrads I was presenting to: with tools around that can do the job of a new graduate with little work experience pretty decently, what kind of jobs will be left for these guys when they graduate?

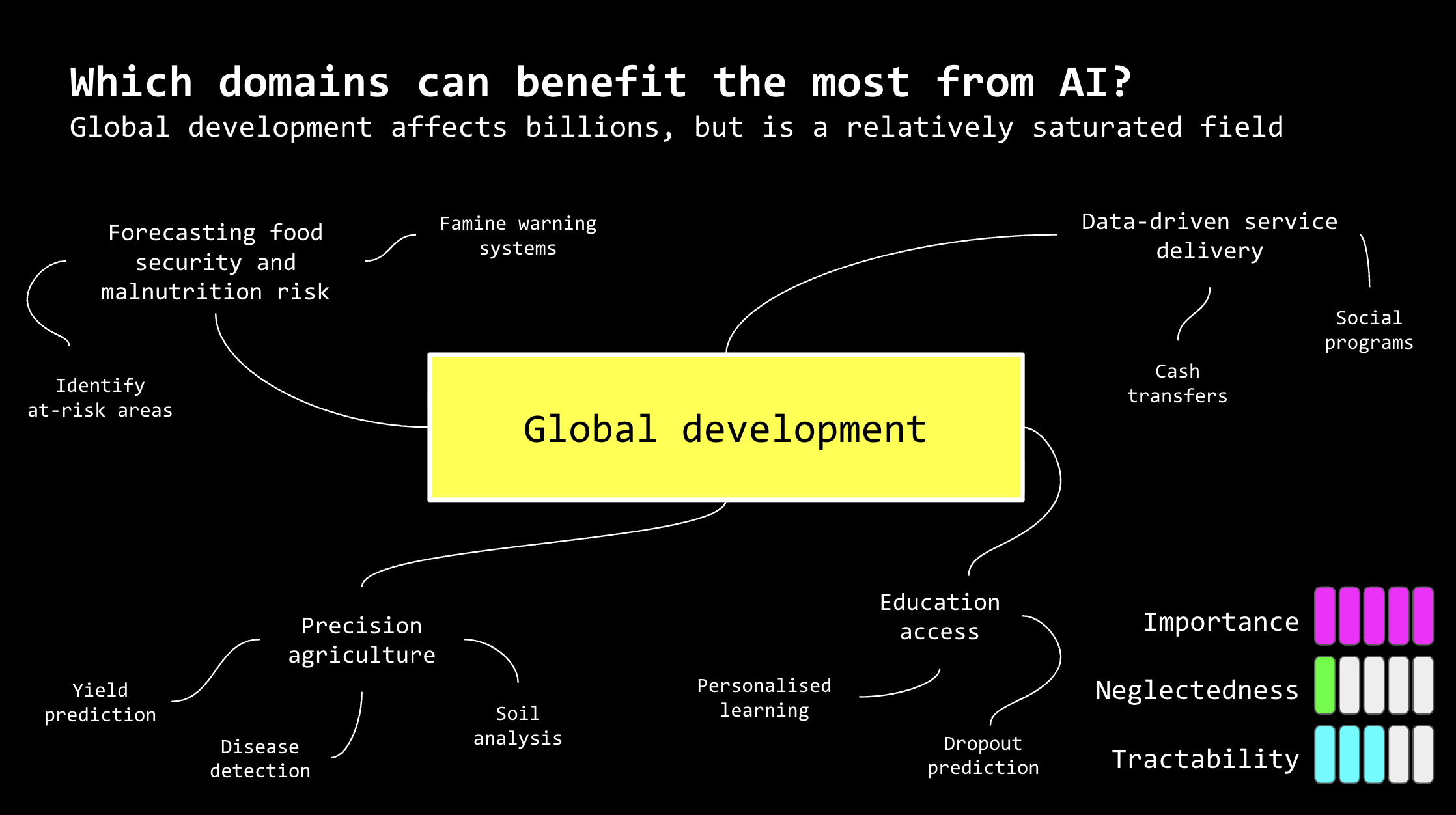

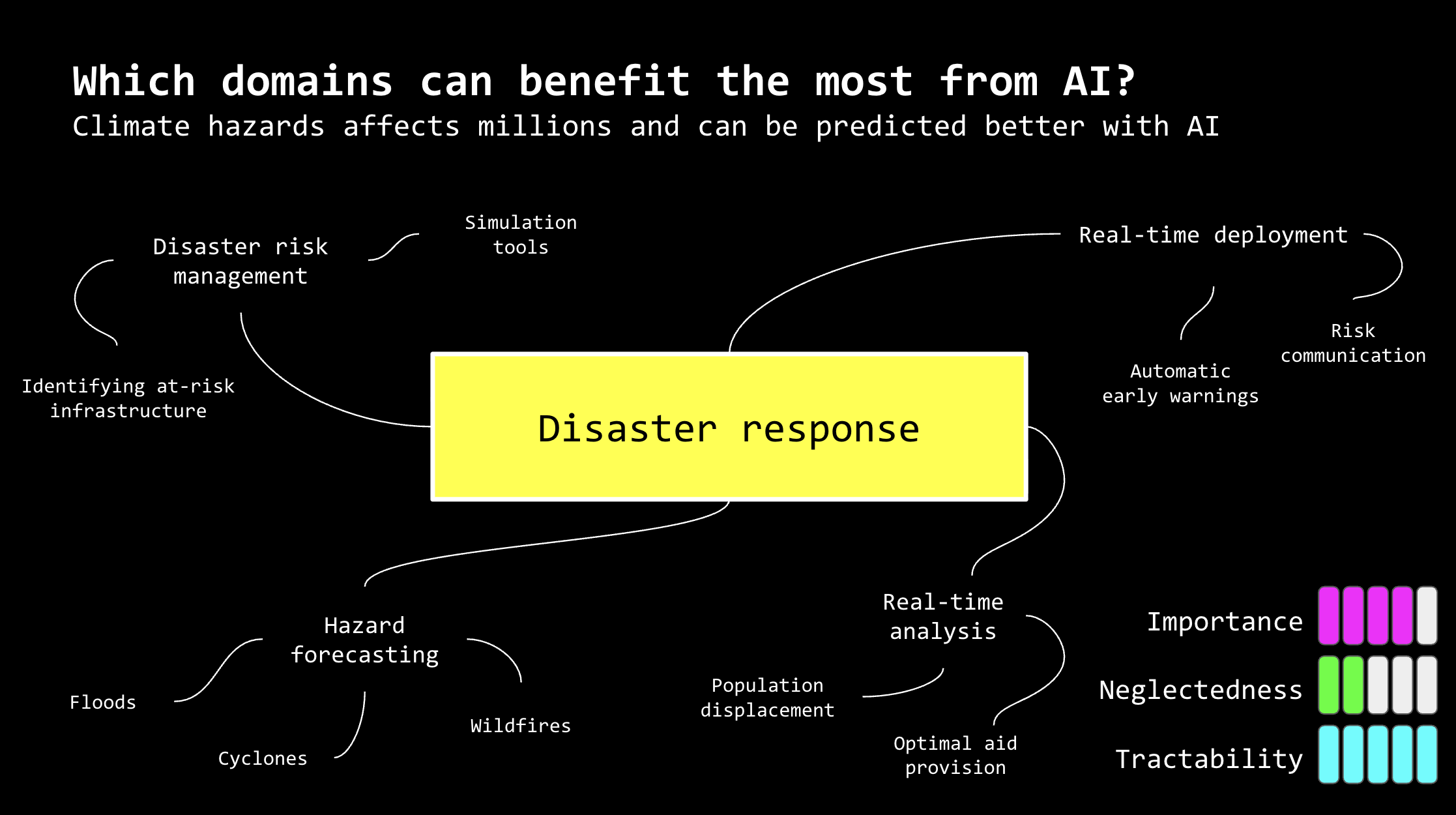

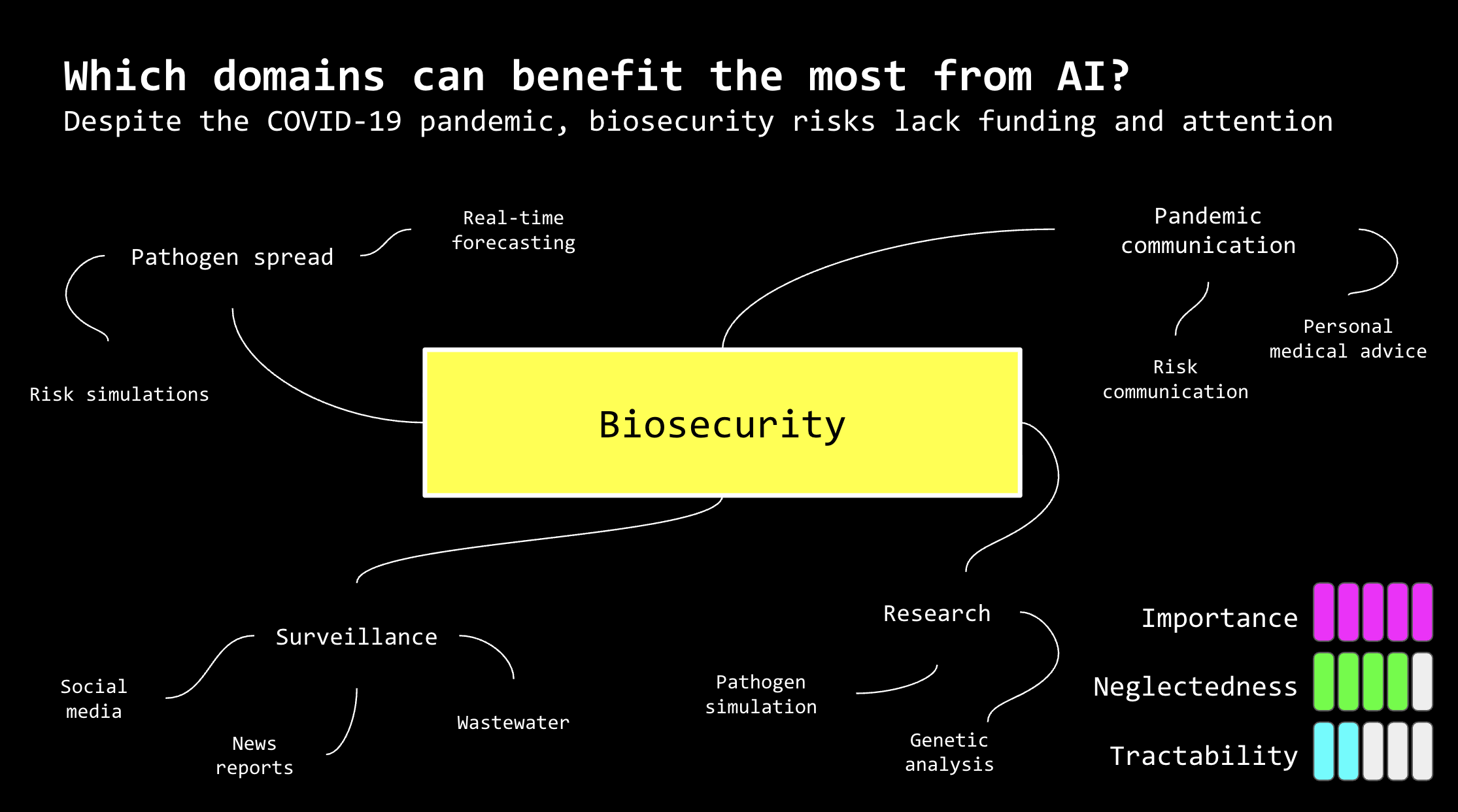

Domains that can benefit from AI, according to me.

I think this concern is especially relevant for programmers, since so much of what junior devs do can be replaced with Claude or whatever. As an employer, I’d also be worried about the quality of programmers graduating now since they have learned to code in the world of AI, and maybe have insufficient fundamental skills to review LLM outputs critically (though maybe this is Boomer-minded of me and they are still fine).

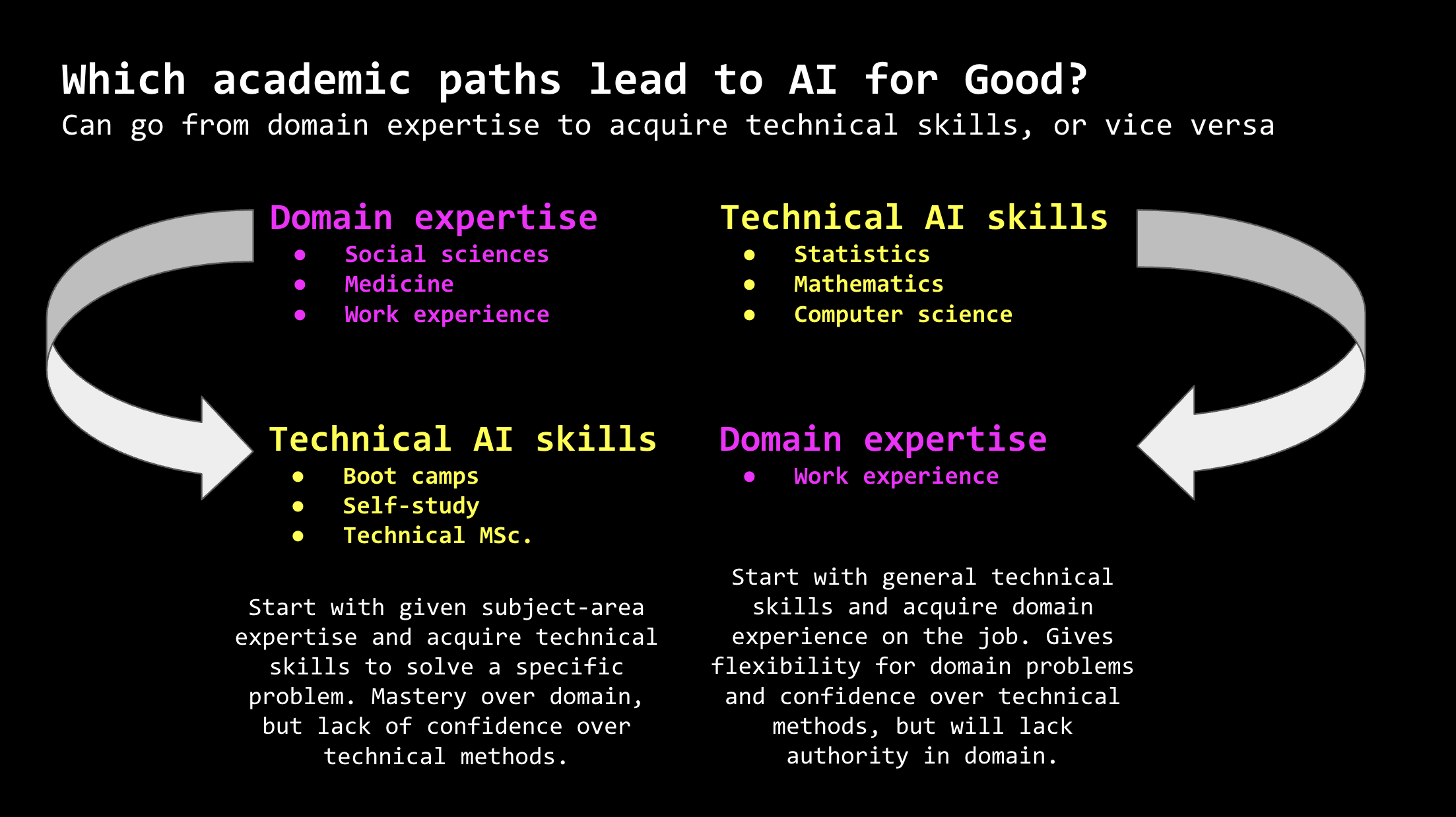

Different pathways to get into AI for good (I do think there is a path if you have strong domain knowledge, but limited technical skills).

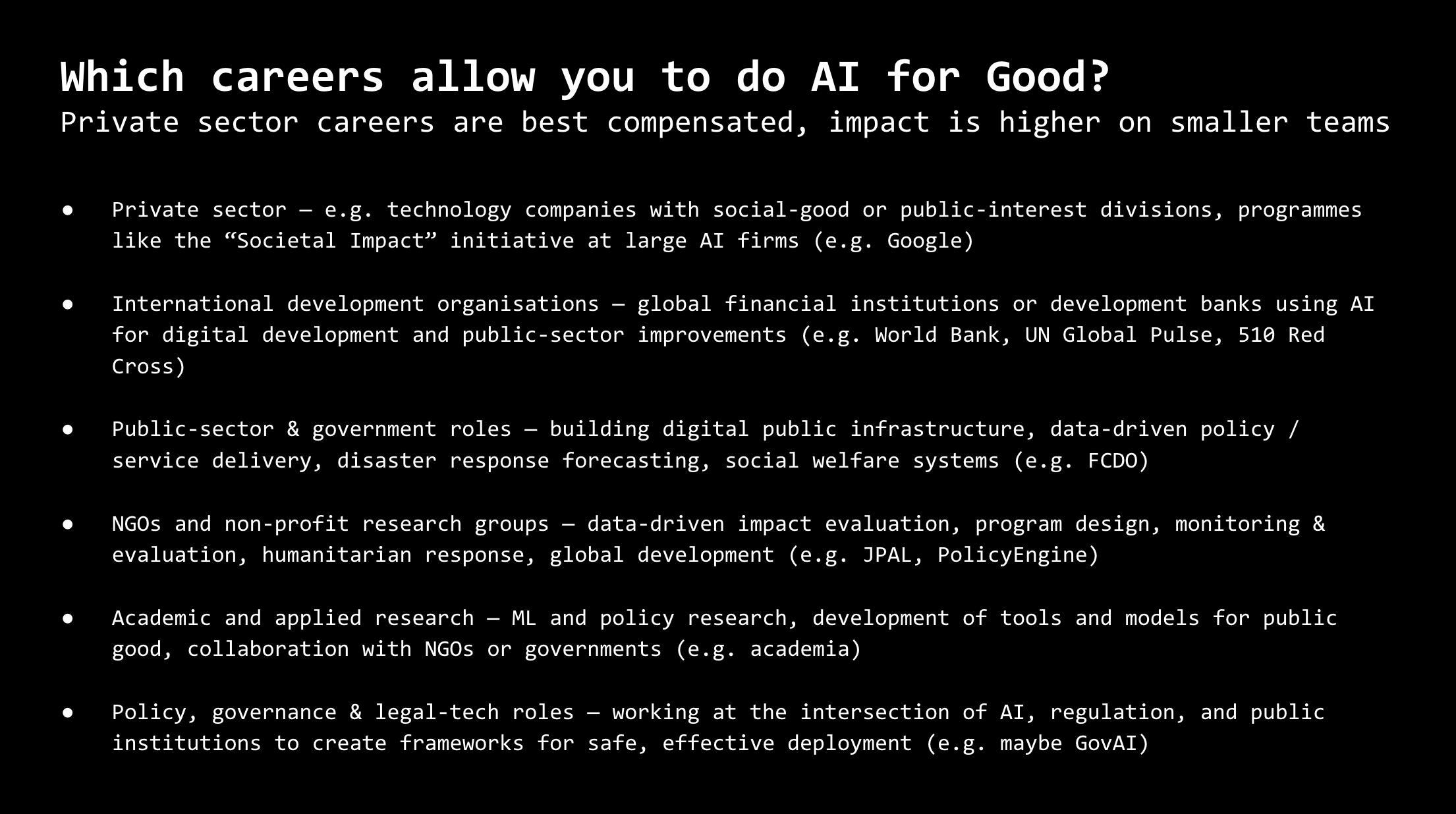

Some actual AI for Good jobs I came up with off the top of my head, or apply to work with me!

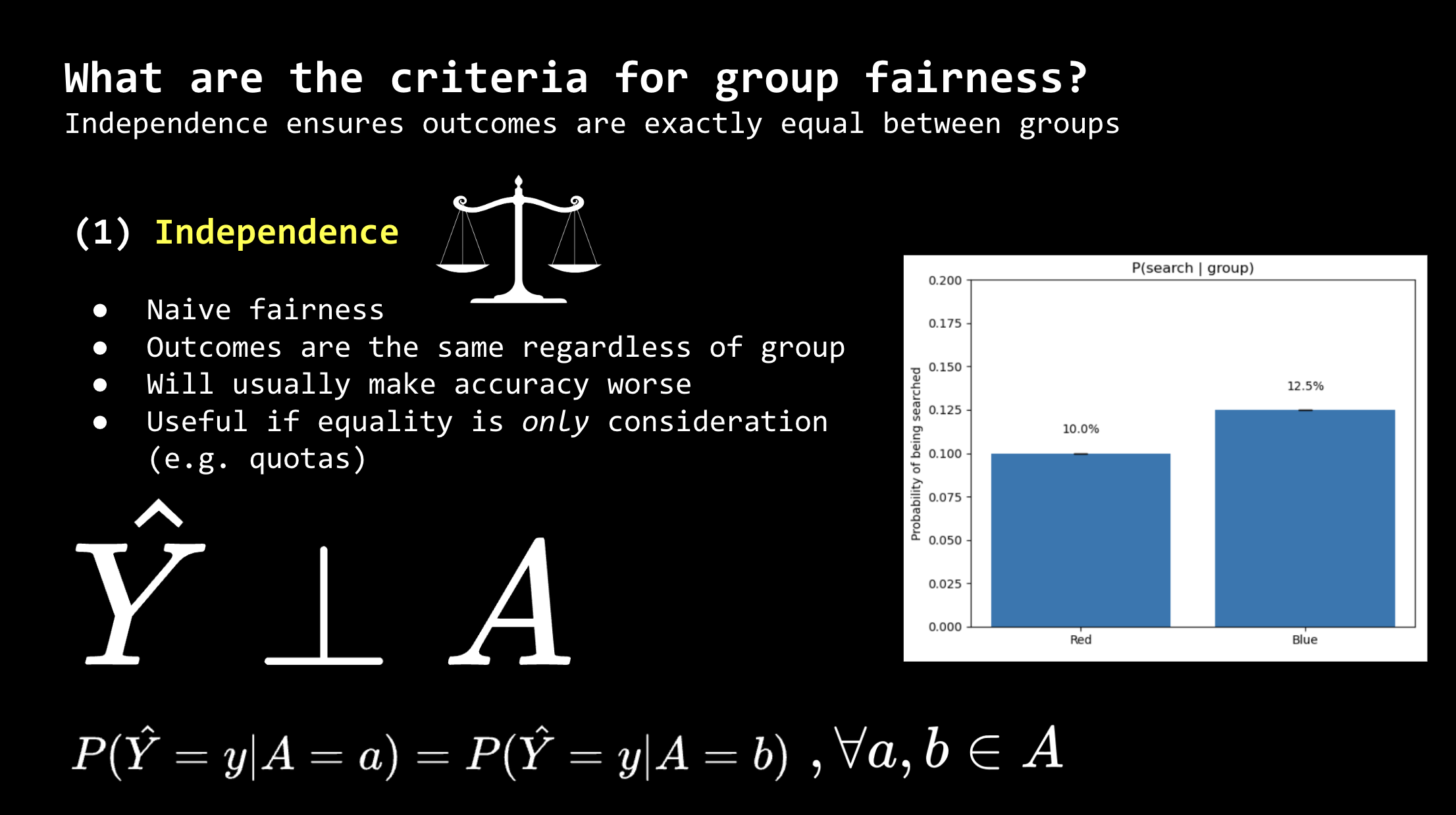

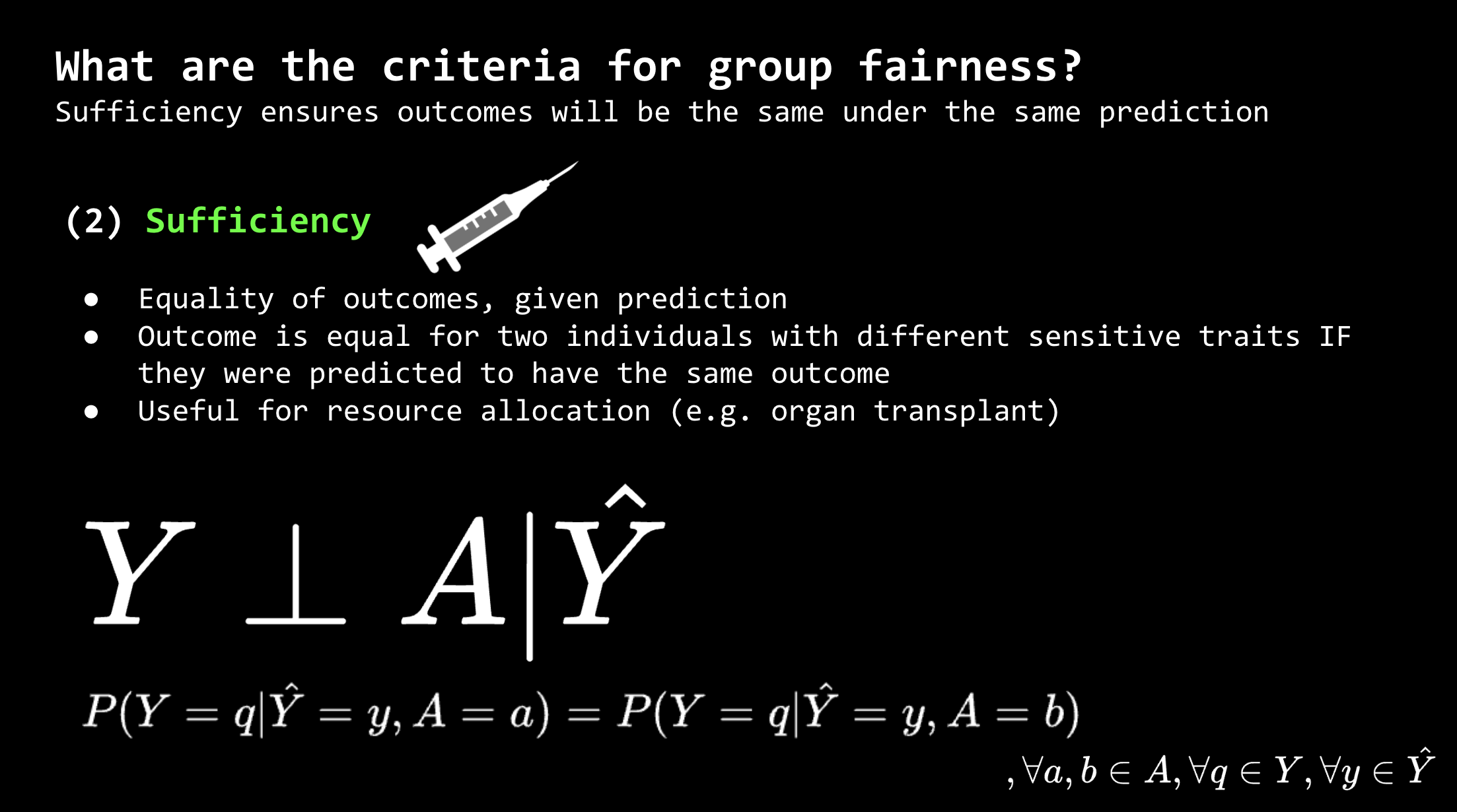

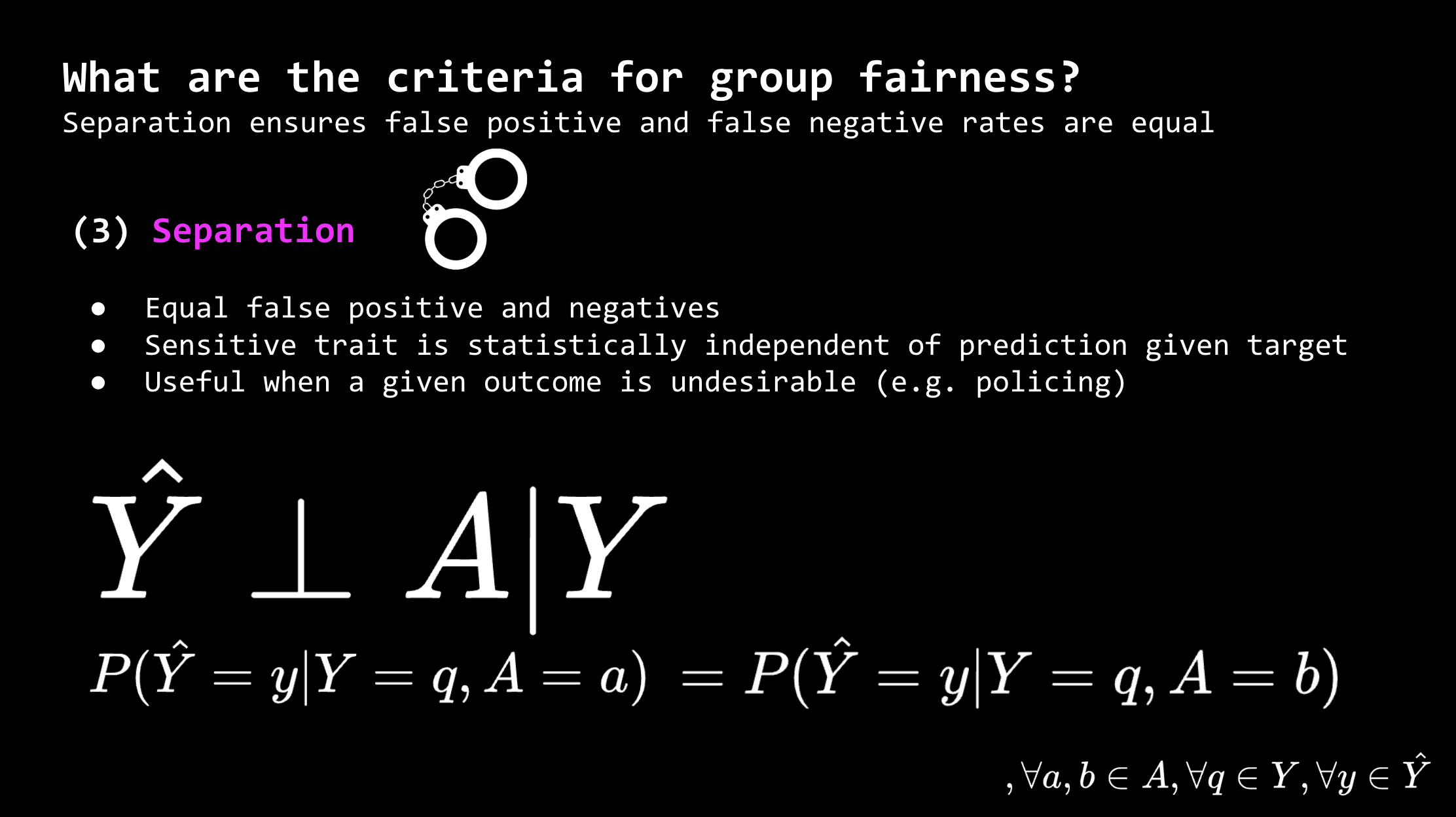

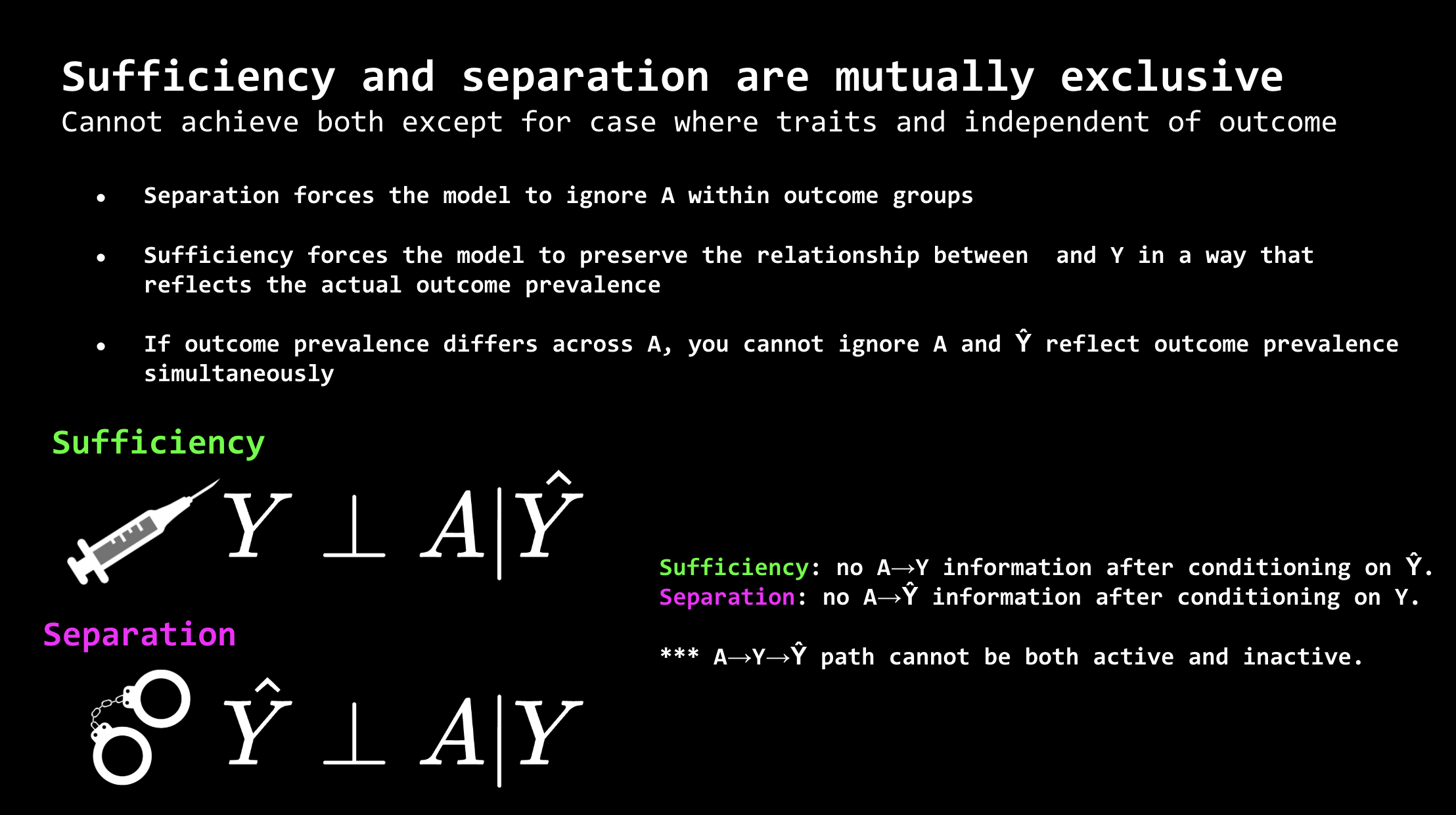

I also presented at the Oxford AI Safety Initiative (OAISI) Research Roundtable, on fairness in machine learning. It’s a fairly technical topic mainly centred around creating precise definitions of fairness, quantifying whether models achieve those criteria, and explaining why certain criteria can sometimes be mutually exclusive.

For example, when using AI for policing as is being pursued in England, fairness in how these algorithms treat different ethnicities is essential for equality and public trust.

Independence is when the predicted outcomes are distributed the same way regardless of group. Not very useful if you want your algorithm to have actual predictive value and outcomes are not actually equal between groups.

Sufficiency is when outcomes are equal for every group given that they are predicted to have the same outcome. For example, if a model predicts someone of a certain ethnicity will commit a crime, the actual probability they will do so is the same for all other ethnicities that were predicted to commit a crime.

Separation is when the false positive and false negative rates of a model’s predictions are the same across all sensitive groups. This is normally the preferred standard for policing.
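
As a rough sketch (not the code from the talk), the three definitions can be checked per group from binary predictions. The function name `fairness_report` and the toy data are made up for illustration, and labels are assumed to be 0 or 1.

```python
from collections import defaultdict


def fairness_report(y_true, y_pred, group):
    """Per-group rates behind the three fairness criteria (binary 0/1 labels)."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for t, p, g in zip(y_true, y_pred, group):
        # "t"/"f": prediction was right/wrong; "p"/"n": prediction was 1/0.
        key = ("t" if t == p else "f") + ("p" if p == 1 else "n")
        counts[g][key] += 1

    def ratio(num, den):
        return num / den if den else float("nan")

    report = {}
    for g, c in counts.items():
        n = sum(c.values())
        report[g] = {
            # Independence: share predicted positive should match across groups.
            "positive_rate": ratio(c["tp"] + c["fp"], n),
            # Separation: error rates should match across groups.
            "fpr": ratio(c["fp"], c["fp"] + c["tn"]),
            "fnr": ratio(c["fn"], c["fn"] + c["tp"]),
            # Sufficiency: predictive values should match across groups.
            "ppv": ratio(c["tp"], c["tp"] + c["fp"]),
            "npv": ratio(c["tn"], c["tn"] + c["fn"]),
        }
    return report


# Toy data: two hypothetical groups of four people each.
y_true = [1, 1, 0, 0, 1, 0, 0, 0]
y_pred = [1, 0, 1, 0, 1, 1, 0, 0]
group = ["A"] * 4 + ["B"] * 4

report = fairness_report(y_true, y_pred, group)
for g, rates in report.items():
    print(g, rates)
```

In this toy data both groups are flagged at the same rate, so independence holds, but their error rates differ, so separation does not.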

It is mathematically impossible to have both sufficiency (equal outcomes given the same prediction) and separation (equal error rates) at the same time, except in special cases such as equal base rates across groups or a perfect predictor. This is critical for engineers applying machine learning to social problems to understand.
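
A small numerical sketch of why the two clash, using made-up numbers. It relies on an identity that follows directly from the confusion-matrix definitions: for a group with base rate p, FPR = p / (1 - p) × (1 - PPV) / PPV × (1 - FNR). The helper name `implied_fpr` is hypothetical, and only the positive-prediction half of sufficiency (equal PPV) is checked here.

```python
def implied_fpr(base_rate, ppv, fnr):
    """False positive rate forced by a group's base rate, PPV and FNR.

    Derived from the definitions of PPV, FPR and FNR:
    FPR = p / (1 - p) * (1 - PPV) / PPV * (1 - FNR)
    """
    return base_rate / (1 - base_rate) * (1 - ppv) / ppv * (1 - fnr)


# Two hypothetical groups with the same PPV and FNR but different base rates.
fpr_a = implied_fpr(base_rate=0.2, ppv=0.8, fnr=0.2)
fpr_b = implied_fpr(base_rate=0.4, ppv=0.8, fnr=0.2)

print(f"group A false positive rate: {fpr_a:.3f}")
print(f"group B false positive rate: {fpr_b:.3f}")
```

With equal PPV and equal FNR, the group with the higher base rate ends up with a higher false positive rate (roughly 0.13 versus 0.05 here), so separation cannot also hold unless the base rates match.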

I’m not sure how aligned the presentation is with the topics usually discussed at these Roundtables, since they usually focus pretty exclusively on LLMs and proposing various solutions to monitor their outputs (often quite engineery, e.g. having another LLM review the outputs). I’m quite skeptical that most of the topics I’ve seen presented here are going anywhere. The only intervention that I think had a plausible theory of change was steering vectors, which have their own limitations.

Regardless, diving a bit more into the topic of machine learning fairness gave me a chance to understand it a bit better, considering it isn’t really my field.

Winter Break

Enjoying the winter fog along the river.

Also finished a few more drawings this month. And got a bit into acrylic painting with Alec over the break.

This statue I drew at the Ashmolean looks somewhat competent.

Deranged Snowman: my first acrylic painting.

Some better paintings we did, mine are on the bottom.

I will be in Canada from the end of the month until partway into January. There is currently a large amount of snow (over a metre) where my parents live.

It’s scarier than it looks, I promise.